Rubin Is Here, but the Real Story Is Cost per Token

Nvidia has now unveiled its new Vera Rubin chips, and as many of you know, it is a stock we follow closely.

It is one of the most important companies in the AI ecosystem, and part of what makes Nvidia so critical is its ability to keep pushing out new chip iterations that are meaningfully better each cycle. That pace of innovation matters because AI training and inference demand continues to rise, especially from hyperscalers that need more performance, better efficiency, and lower cost per workload.

Despite reporting an outstanding US$216 billion in revenue and strong growth in operating income, Nvidia shares still fell 5%. That tells you the market is not just focused on how strong the numbers are today. It is also focused on whether AI demand can keep accelerating fast enough to justify expectations.

That is why this article is not just about the share price reaction.

Here we focus on what you need to know about Nvidia’s latest Vera Rubin chips, and why the economics behind them could be so important for the next wave of AI demand.


The New AI Rack Economics

One of the most important performance metrics with these chips is performance per watt. In simple terms, that means how much compute you get for each unit of power consumed, whether the workload is AI training or inference.

That matters because AI workloads are becoming incredibly power intensive, and at scale, efficiency is everything. With Rubin, Nvidia is pushing that forward again, with the new chip offering 2x better performance than its latest Grace Blackwell platform.
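To see why efficiency matters so much at scale, here is a minimal sketch of the arithmetic when a data centre is limited by power rather than by chip count. The 100 MW site size and the normalised baseline are hypothetical, and treating the 2x figure as a performance-per-watt gain is an assumption made purely for illustration.

```python
def compute_within_power_budget(power_budget_watts: float, perf_per_watt: float) -> float:
    """Total compute a site can deliver when power is the binding constraint."""
    return power_budget_watts * perf_per_watt


# Hypothetical site: 100 MW of power available for AI racks.
SITE_POWER_WATTS = 100e6

# Normalised efficiency figures (assumptions, not measured values).
BASELINE_PERF_PER_WATT = 1.0  # Grace Blackwell, normalised to 1
RUBIN_PERF_PER_WATT = 2.0     # assumes the 2x claim applies per watt

baseline_compute = compute_within_power_budget(SITE_POWER_WATTS, BASELINE_PERF_PER_WATT)
rubin_compute = compute_within_power_budget(SITE_POWER_WATTS, RUBIN_PERF_PER_WATT)

uplift = rubin_compute / baseline_compute
print(f"Compute uplift at the same power budget: {uplift:.1f}x")
```

Under these assumptions, the same power budget delivers twice the compute, which is why a power-constrained operator may care more about efficiency than about the sticker price of each chip.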

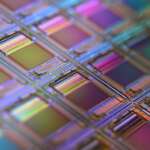

What also stands out is the sheer complexity behind it. Building a Rubin chip requires roughly 1.3 million components and more than 80 suppliers, which shows just how advanced and deeply integrated Nvidia’s supply chain has become.

For AI training and inference, one of the most important economic metrics is cost per token. A token is essentially a small unit of text that an AI model reads or generates. In production inference, and even during training, AI teams care deeply about cost per token because it directly affects what it costs to serve customers and how profitable those workloads can be.

That is what makes Rubin especially interesting. Nvidia says these new Rubin systems are 10x more cost-effective per token, even though a Rubin rack is priced around 25% higher than its Blackwell predecessor. So while the upfront cost is higher, the economics can still be very attractive: each rack serves far more tokens, so the cost of each token falls even after paying the higher purchase price.

In other words, customers may pay more initially, but they can potentially get much better long-term value through stronger performance, higher throughput, and lower operating cost per unit of compute.
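The trade-off between a higher rack price and a lower cost per token can be sketched with simple arithmetic. This is a simplified model, not Nvidia's methodology: it assumes cost per token is just rack price divided by tokens served over the rack's life, and it ignores power, cooling, networking, and utilisation. All function and variable names are hypothetical.

```python
def cost_per_token(rack_price: float, tokens_served: float) -> float:
    """Simplified cost per token: purchase price spread over all tokens served."""
    return rack_price / tokens_served


def implied_throughput_ratio(price_ratio: float, cost_per_token_gain: float) -> float:
    """Throughput multiple needed for a pricier rack to hit a given cost-per-token gain."""
    return price_ratio * cost_per_token_gain


# Figures quoted in the article, relative to a Blackwell rack.
PRICE_RATIO = 1.25          # Rubin rack priced about 25% higher
COST_PER_TOKEN_GAIN = 10.0  # claimed 10x improvement in cost per token

throughput_ratio = implied_throughput_ratio(PRICE_RATIO, COST_PER_TOKEN_GAIN)

# Sanity check with normalised units: Blackwell costs 1 and serves 1 unit of tokens.
blackwell_cpt = cost_per_token(1.0, 1.0)
rubin_cpt = cost_per_token(PRICE_RATIO, throughput_ratio)

print(f"Implied token throughput per rack: {throughput_ratio:.1f}x")
print(f"Cost-per-token improvement: {blackwell_cpt / rubin_cpt:.1f}x")
```

Under this capex-only assumption, a rack that costs 1.25x as much would need to serve about 12.5x the tokens to deliver a 10x improvement in cost per token. In practice, power and other operating costs also feed into the figure, so the real throughput multiple could differ.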

So how does Nvidia actually achieve this?

A Rubin AI rack is essentially a massive AI supercomputer packed into one system. In Nvidia’s NVL72 configuration, each rack contains 18 compute trays, with 4 Rubin GPUs and 2 Vera CPUs in each tray (72 GPUs and 36 CPUs in total), and the full rack consumes around 220kW of power.

Another key part of the system is NVLink, and this is where a lot of the real magic happens. NVLink is Nvidia’s high-speed interconnect technology that links all of these GPUs and CPUs together, allowing them to function more like one unified system rather than a collection of separate chips.

That matters because it allows data to move between processors far more quickly and efficiently. Instead of each chip working in isolation, the GPUs and CPUs can access and process data simultaneously across the rack, which dramatically increases the system’s ability to handle larger models, more complex workloads, and faster computation at scale.

The Next Compute Cycle, Rubin vs Helios

What will matter from here is whether Nvidia can maintain its pricing power as competition starts to rise, particularly with AMD bringing its Helios rival rack to market.

At the same time, the industry is still dealing with massive AI capex, ongoing memory supply constraints, and a broader infrastructure buildout that is only getting bigger.

With Apple committing US$400 billion over the next 3 to 4 years, and with US semiconductor reshoring accelerating through new Arizona fabrication plants, it will be critical to watch how the supply chain scales to meet demand.