Cerebras hits Nasdaq with a $20b OpenAI deal and wafer-scale chips

Cerebras Systems has hit the Nasdaq in one of the largest public offerings in history, pricing at $185 per share before opening at $350.

The stock climbed as high as $385 shortly after going live, before settling at $311 by the closing bell.
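To put those price moves in percentage terms, here is a minimal Python sketch. It uses only the four prices quoted above, ignores fees, and assumes a buyer who was allocated shares at the $185 offer price, which most retail investors were not.

```python
def pct_change(start: float, end: float) -> float:
    """Percentage change from a starting price to an ending price."""
    return (end - start) / start * 100

# Prices quoted in this article (US$ per share).
ipo_price = 185.0
open_price = 350.0
intraday_high = 385.0
close_price = 311.0

# Each first-day price measured against the IPO price.
for label, price in [("open", open_price), ("high", intraday_high), ("close", close_price)]:
    print(f"{label}: {pct_change(ipo_price, price):+.1f}% vs IPO price")

# How much of the opening pop was given back by the close.
print(f"open to close: {pct_change(open_price, close_price):+.1f}%")
```

Run as written, it shows the stock opening about 89.2% above the offer price, peaking about 108.1% above it and closing about 68.1% above it, while giving back roughly 11.1% between the open and the close.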

Investor demand was clearly intense. SoftBank and Arm Holdings were reportedly already looking at a potential acquisition of the company before the listing, which suggests the interest was not limited to public-market buyers: strategic buyers were circling as well.

Cerebras has also signed a major strategic deal with OpenAI: a multi-year agreement worth up to $20 billion. Talk about perfect timing!

Investors may be wondering why the market is so excited. Yes, Cerebras sits inside the AI infrastructure boom, but the story runs deeper than simply having AI exposure.

The more interesting part is the architecture behind the systems it sells. Cerebras is not just trying to build another AI chip. It is taking a very different approach to how AI compute should be designed, scaled and delivered to customers.

What the company does

For newer investors, here is the short version: Cerebras designs and sells AI compute infrastructure. In simple terms, it builds the chips and systems used to train AI models and run inference.

The key difference is its architecture.

Instead of taking a silicon wafer and cutting it into hundreds or thousands of smaller chips, Cerebras builds one massive chip that uses almost the entire wafer. This is known as a wafer-scale architecture, and it is a sharp break from traditional chip design.

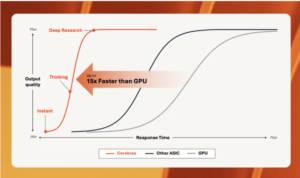

In a normal AI system, data constantly moves between compute and memory. That back-and-forth creates latency, uses power, and can limit performance, especially as AI models become larger and more complex.

Cerebras is trying to solve that bottleneck by keeping more of the system on one giant chip. Compute and memory sit much closer together, so data does not need to travel as far between separate chips and components.

The company argues this makes both training and inference significantly faster and more efficient, which is the performance advantage shown in the chart.

Cerebras business model

Cerebras currently has three core products, though hardware sales still make up the majority of the business.

The main revenue driver is its CS-3 systems and multi-system clusters, which are sold to customers that want to own their AI infrastructure outright. In 2025, this segment generated around $358 million of Cerebras’ $510 million in total revenue, or roughly 70%.

The second piece is cloud and services, which is more focused on hyperscalers and developers running inference.

Once a customer has a working AI model, the next challenge is running that model at the lowest possible cost. Cerebras lets developers run models like Llama on its hardware through an API and pay per token processed.

This puts Cerebras directly up against inference providers such as AWS, Groq and Together AI.

In 2025, this cloud and services segment generated around $152 million in revenue.
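The two segment figures quoted above add up to the full $510 million, so each segment's share of revenue can be worked out directly. A minimal Python sketch, using only the article's rounded 2025 numbers in US$ millions:

```python
# 2025 segment revenue as quoted in this article, in US$ millions.
segments = {
    "CS-3 systems and clusters": 358,
    "Cloud and services": 152,
}
total_reported = 510

# Check that the two segments account for all reported revenue.
total = sum(segments.values())
assert total == total_reported, "segments should sum to reported revenue"

# Print each segment's dollar figure and its share of the total.
for name, revenue in segments.items():
    share = revenue / total * 100
    print(f"{name}: ${revenue}m ({share:.1f}% of revenue)")
```

This puts hardware at about 70.2% of revenue and cloud and services at about 29.8%, which is why the business still reads primarily as a hardware story.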

The risk you should not miss

Investors will naturally get caught up in the hype around a new AI name coming to market, but IPOs are often followed by a sharp pullback once the early excitement fades.

The real risk with Cerebras right now is customer concentration.

In 2025, 86% of the company’s $510 million in revenue came from just two UAE-affiliated entities. MBZUAI contributed 62%, while G42 accounted for 24%.

Customer diversification has likely improved since then, but investors will need to wait until the next reporting season to see how far that concentration has actually fallen.

Until then, this remains a key risk.

If demand from one major customer slows or gets disrupted, Cerebras could be more exposed to large share price swings than a more diversified AI infrastructure company.
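To make that exposure concrete, the Python sketch below converts the 2025 customer percentages into approximate dollar amounts and then runs one stress test. The 50% spending cut is a purely hypothetical assumption chosen for illustration, not a forecast, and because the source percentages are rounded, the dollar figures are approximate.

```python
total_revenue_m = 510  # 2025 revenue, US$ millions
customer_share_pct = {"MBZUAI": 62, "G42": 24}  # share of 2025 revenue

# Convert each customer's percentage share into approximate dollars.
dollars = {name: total_revenue_m * pct / 100 for name, pct in customer_share_pct.items()}
top_two_pct = sum(customer_share_pct.values())
top_two_m = sum(dollars.values())
everyone_else_m = total_revenue_m - top_two_m

for name, value in dollars.items():
    print(f"{name}: ~${value:.0f}m")
print(f"Top two: {top_two_pct}% (~${top_two_m:.0f}m); all other customers: ~${everyone_else_m:.0f}m")

# Hypothetical stress test (assumption, not a forecast): the largest customer halves its spend.
cut = 0.5
lost_m = dollars["MBZUAI"] * cut
print(f"Revenue impact of a {cut:.0%} cut from MBZUAI: -{lost_m / total_revenue_m * 100:.0f}%")
```

On these numbers, MBZUAI and G42 together account for roughly $439 million, leaving only about $71 million from every other customer combined, and a halving of the largest customer's spend alone would remove about 31% of revenue.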

The other point investors need to be careful with is backlog quality.

OpenAI is involved in a growing number of circular AI infrastructure deals across the market, where the same companies can act as investor, supplier and customer to one another, while also facing more competition from players like Anthropic. So when investors see more than $20 billion in reported backlog tied to OpenAI, it needs to be treated with caution.

Not every headline deal should be viewed the same way as contracted revenue.

We saw a similar issue with Nvidia’s announced OpenAI deal, which appeared to give OpenAI an option rather than a hard commitment. That distinction matters because optionality is not the same as guaranteed demand.

Investors can find more coverage of global technology and semiconductor stocks here at Stocks Down Under.